HPC-based Hand Gesture Dataset Generation for Detection & Tracking

In AR, users need to manipulate virtual 3D objects in space. In real life, we do this with our hands, which move with six degrees of freedom (6 DoF), and with our fingers for fine movements. The need for touchless interaction creates an opportunity to take advantage of hand movements and gestures.

The challenge is to deliver hand tracking on existing computing devices with low power consumption and near-zero latency. The goal of this HPC experiment is to design and develop a software library, based on machine learning techniques, that detects and recognizes hand gestures dynamically and without interruption.

SECTOR: Manufacturing

TECHNOLOGY USED: HPC, AI, Machine Learning

COUNTRY: Italy

The challenge

Hand tracking and gesture recognition are critical components of AR (Augmented Reality) technology because they allow users to interact with virtual objects in a natural and intuitive way. To make them truly seamless, however, two main challenges must be addressed. The first is latency reduction: AR experiences should be smooth and responsive, so any delay in hand tracking or gesture recognition can significantly degrade the user experience. The second is detecting hand gestures accurately and robustly across different scenarios in the shortest possible time.

Most similar solutions are hardware-dependent, and standard products are calibrated to detect one hand at a time.

The market currently offers either products limited to a predefined set of recognized gestures or expensive, time-consuming custom solutions that train additional gestures on request.

The solution

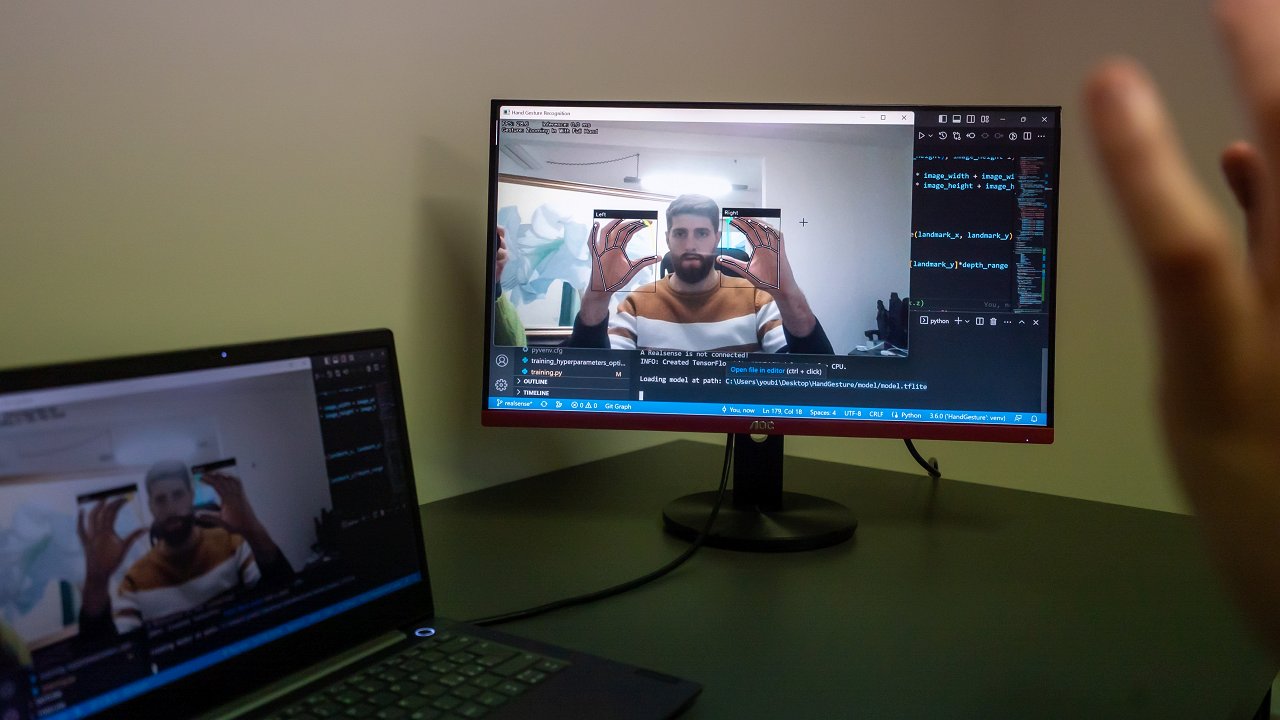

The solution developed in the experiment, called “HandyTrack”, applies high-performance computing (HPC) to computer vision and machine learning. Its main goal is to improve the performance of hand gesture detection and tracking algorithms by using HPC to train deep learning models and to generate large datasets from 3D models. HPC reduces the time required to train the algorithms and makes it possible to generate larger, more diverse datasets, which in turn improves the accuracy and robustness of hand gesture detection and tracking.

HandyTrack is a hardware-agnostic software framework that provides unlimited dynamic gesture recognition and is calibrated to detect both hands at the same time.

Its competitive advantage lies in the synthetic data augmentation framework, which enables recognition of an unlimited number of dynamic gestures.
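To make the idea of generating training data from 3D models more concrete, here is a minimal sketch in Python. It is not HandyTrack's actual pipeline, whose details are not described here. It assumes a hypothetical six-joint hand template with placeholder coordinates, applies a random 6 DoF pose (three rotations and three translations), and projects the joints to 2D pixel coordinates with a simple pinhole camera. All names, ranges and camera parameters (`TEMPLATE`, `synth_sample`, `focal`, and so on) are illustrative assumptions.

```python
import math
import random

# Hypothetical 3D hand template: joint positions (x, y, z) in metres.
# Placeholder values for illustration only, not real HandyTrack data.
TEMPLATE = {
    "wrist": (0.0, 0.0, 0.0),
    "thumb_tip": (-0.04, 0.06, 0.01),
    "index_tip": (-0.02, 0.10, 0.0),
    "middle_tip": (0.0, 0.11, 0.0),
    "ring_tip": (0.02, 0.10, 0.0),
    "pinky_tip": (0.04, 0.08, 0.0),
}


def rotate(point, yaw, pitch, roll):
    """Rotate a 3D point by roll (x axis), then pitch (y axis), then yaw (z axis)."""
    x, y, z = point
    y, z = (y * math.cos(roll) - z * math.sin(roll),
            y * math.sin(roll) + z * math.cos(roll))
    x, z = (x * math.cos(pitch) + z * math.sin(pitch),
            -x * math.sin(pitch) + z * math.cos(pitch))
    x, y = (x * math.cos(yaw) - y * math.sin(yaw),
            x * math.sin(yaw) + y * math.cos(yaw))
    return (x, y, z)


def project(point, focal=500.0, cx=320.0, cy=240.0):
    """Pinhole projection of a 3D point (z > 0) to pixel coordinates."""
    x, y, z = point
    return (focal * x / z + cx, focal * y / z + cy)


def synth_sample(rng, label):
    """Create one labelled sample by posing the template with a random 6 DoF pose."""
    # Three rotational degrees of freedom (assumed range: +/- 30 degrees).
    yaw, pitch, roll = (rng.uniform(-math.pi / 6, math.pi / 6) for _ in range(3))
    # Three translational degrees of freedom; tz keeps the hand in front of the camera.
    tx, ty = rng.uniform(-0.1, 0.1), rng.uniform(-0.1, 0.1)
    tz = rng.uniform(0.3, 0.8)
    keypoints = {}
    for name, joint in TEMPLATE.items():
        x, y, z = rotate(joint, yaw, pitch, roll)
        keypoints[name] = project((x + tx, y + ty, z + tz))
    return {
        "label": label,
        "pose": {"yaw": yaw, "pitch": pitch, "roll": roll, "t": (tx, ty, tz)},
        "keypoints": keypoints,
    }


def generate_dataset(n, label, seed=0):
    """Generate n synthetic samples for one gesture label, reproducibly."""
    rng = random.Random(seed)
    return [synth_sample(rng, label) for _ in range(n)]
```

Calling `generate_dataset(1000, "open_palm")` would return 1,000 labelled keypoint sets for a single hypothetical gesture. Each sample is independent of the others, which is the kind of workload that parallelizes well across HPC nodes. A real pipeline would presumably render full images from articulated 3D hand models rather than project a handful of joints.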

Business impact, Social impact, Environmental impact

The experiment is expected to have a strong business impact by improving the profitability of a software library for hand gesture recognition and tracking whose datasets and training are produced with HPC. The library offers more accurate hand gesture detection in less time, an improvement on the state of the art represented by the main competitors. The result will be delivered both as a standalone software library bundled with wearable electronic devices and as a high-performance cloud-based application.

Moreover, by reducing training time and generating more realistic and diverse datasets, HandyTrack has the potential to significantly advance the field of touchless interaction and make hand gesture-based interfaces a reality. The solution is designed with a human-centered vision, and its social impact lies in increasing inclusivity and diversity. For example, unlimited customized hand gesture datasets can be used to support people with disabilities, such as people who are deaf or non-speaking, in their interactions with non-disabled people and with machines.

Benefits

- Improved training time: HPC reduces neural network training time by up to 80% compared to traditional computing resources. This can result in faster development and deployment of hand gesture recognition systems.

- Reduced costs: HPC reduces the cost of generating custom hand gestures by 99.6%. This can make it easier for companies and individuals to create and train custom hand gestures.

- Natural user experience: with the ability to switch from a static hand gesture model to a dynamic hand gesture model, AR devices can provide a more natural user experience.

- Enhanced capability: HPC makes it possible to create hand gesture datasets of unlimited size, which can be used to address previously untapped domains and market segments.

Organizations involved:

End User: Youbiquo srl

HPC Provider: CINECA

Domain Expert: BI-REX